How Does Live Streaming Work? A Beginner’s Guide

by Rafay Muneer, Last updated: May 12, 2026

Live streaming is the real-time broadcast of video and audio over the internet to viewers anywhere in the world. From the viewer's side, it looks simple: load the page, press play, watch. From the broadcaster's side, a chain of technical processes runs every second to make that experience work: capture, encoding, segmentation, transcoding, content delivery network distribution, and decoding on the viewer's device. Each step has to function correctly, in sequence, in real time, or the stream fails.

This guide breaks down what actually happens during a live stream. We cover the core components every live stream needs, the streaming protocols that move data between them, the technical process from camera to viewer, and how enterprise platforms handle the parts production teams should not have to think about.

By the end, you should understand what a working live streaming setup looks like, where failures typically originate, and what to look for when evaluating live streaming infrastructure for your organization.

How Does Live Streaming Work: An Overview

Before we dive into the technical inner workings of live streaming, let’s take a step back to understand what live streaming means.

Streaming video, whether live or on-demand, demands far more bandwidth and processing than most other forms of media streaming. This comes down to the way video works in general. A video is a series of frames stitched together along with accompanying audio. Higher resolutions, higher bitrates, and longer runtimes mean more data, so the video is larger in file size and needs more bandwidth to stream.

But there's another side to it. When you stream video content, you also have to take into consideration the encoding and decoding processes that will be happening to make the video delivery possible.

When it comes fresh off a camera, video content is still in raw form and cannot be streamed effectively. Modern encoders and transcoders are designed to process video streams, compressing and converting them into formats like H.264 or H.265 that are optimized for online delivery.

Think of it like reading a book. It's not enough to have all the words in the right order. You also need punctuation and formatting to help your brain parse the text efficiently. For streaming, the encoding process adds the 'punctuation' to the video data, making it easier for devices to interpret and display the information correctly.

Once the stream is encoded, it’s sent through a content delivery network (CDN), which powers global live video distribution. The CDN stores and replicates your live stream across multiple edge servers, helping keep latency low, buffering minimal, and playback reliable for audiences worldwide.

Lastly, efficient live stream distribution ensures the encoded data reaches the viewer quickly, where it’s decoded and played on their device without buffering.

What is Live Video Streaming?

You may already be familiar with live video streaming. If not, we recommend checking out this blog post on what live streaming is and why you should use it. However, let's brush up on the basics, just in case.

Quite simply, live streaming is the process of broadcasting media, in this case video and audio, to viewers in real time over the internet. This is different from streaming an on-demand video because the content is not pre-recorded and cannot be edited or modified. The video is played almost as soon as it's broadcast (give or take a few moments of delay).

What Live Streaming Is Used For

Live streaming serves a wide range of organizational needs. Companies use it for executive town halls and all-hands meetings, when leadership communicates to the entire workforce at once. Training and L&D teams use it for instructor-led sessions reaching distributed employees. Event teams use it for product launches, customer summits, and partner events that need to reach audiences beyond a venue's physical capacity. Marketing teams use it for webinars and brand events that generate engagement during the live broadcast and continue to perform as on-demand content afterward.

For most enterprise use cases, the broader format is webcasting, which is a structured, often produced live broadcast intended for a large one-way audience with authentication, audience controls, and on-demand archiving. For more depth on that distinction and how webcasting differs from generic live streaming, see our guide to webcasting.

What Components Are Needed to Make a Live Stream Work?

Here's a question: How does live streaming work to deliver video playback in real-time when the viewer is watching from halfway across the world? Intuitively, it may sound a bit confusing, given that large amounts of video data have to be transmitted seamlessly.

Luckily, advancements in live streaming technology have helped ensure that high-resolution video can be streamed in real-time to audiences across the world. Let's delve into the components that work together to make this real-time experience possible:

Audio/Video Source

Your audio and/or video source is the content you'll be capturing and streaming over the internet. It can be a video camera filming a live event, a webcam for a personal stream, or even a screen capture of your computer for an educational stream.

This source generates the raw audio and video data that will be the foundation of your live stream. The keyword here is 'raw' since it has to go through processes before it can be turned into a streamable format.

Encoding

Remember the raw format we just talked about? Encoding is the process that helps 'refine it.' It does this in two major ways. The first is simply converting the raw video input into a more manageable digital form through the use of codecs. This is done to help with the compatibility of the video and make it playable across a range of devices and browsers.

The second part is compression, where the size of the video is reduced by removing redundant information, both within each frame and between consecutive frames, so that less data has to be stored and sent. This helps lower the bandwidth required to stream video content and the storage space requirements of the CDN.

Transcoding

Even in the best of circumstances, live streaming can be prone to delays, buffering, and occasional stops in playback. A broadcaster can go live under the most ideal conditions, but they can't account for the less-than-ideal internet quality of viewers tuning in for that stream.

That's where transcoding comes in. Transcoding creates several renditions of the video in several bitrates and resolutions. This is essential to help make adaptive bitrate (ABR) streaming possible, where the video is switched to a lower quality when internet speeds drop rather than stopping the stream entirely.

There's a lot more to learn about adaptive bitrate streaming. Check out our detailed blog post on what ABR is and how it works to know more.

Live Streaming Protocols

Live streaming protocols dictate how the encoded audio and video data are packaged, transmitted, and received to ensure a smooth and synchronized playback experience. The live streaming protocols provide the instructions necessary for encoding, transcoding, and adaptive bitrate processes.

Think of these protocols as the rulebook that defines how data is packaged, optimized, transmitted, and streamed to the end user. There are several different streaming protocols out there. Each of them works a certain way and has advantages and drawbacks. We'll discuss these in a bit.

Content Delivery Network (CDN)

A content delivery network is a network of geographically dispersed servers that take content from a single origin server and cache it on multiple edge servers so that it can be served to users across the world more efficiently and without the drawbacks of latency.

Say you're a viewer in Japan trying to stream a live video broadcast from the US. Normally, you'd experience high latency, meaning a significant delay as the video data travels a vast distance. This translates to constant buffering and frustrating start-and-stop playback as your video player struggles to keep up with the data stream from the origin server.

With a CDN, however, the scenario changes dramatically. A CDN might have an edge server located in Japan or a nearby region. This geographically closer server would hold a cached copy of the live stream, significantly reducing the distance the data needs to travel to reach your device.

Streaming Server

In a live streaming setup, the streaming server acts as the middleman between the encoder and the content delivery network (CDN). It receives the encoded audio and video data stream from the encoder and prepares it for delivery. This is also where live streaming protocols work their magic to produce video segments of different renditions necessary for adaptive bitrate streaming (ABR).

A streaming server can either be set up on-premises for organizations that require greater control over their data or hosted on cloud infrastructure for those that want to deploy with lower upfront costs and want to keep future-proof scalability in mind.

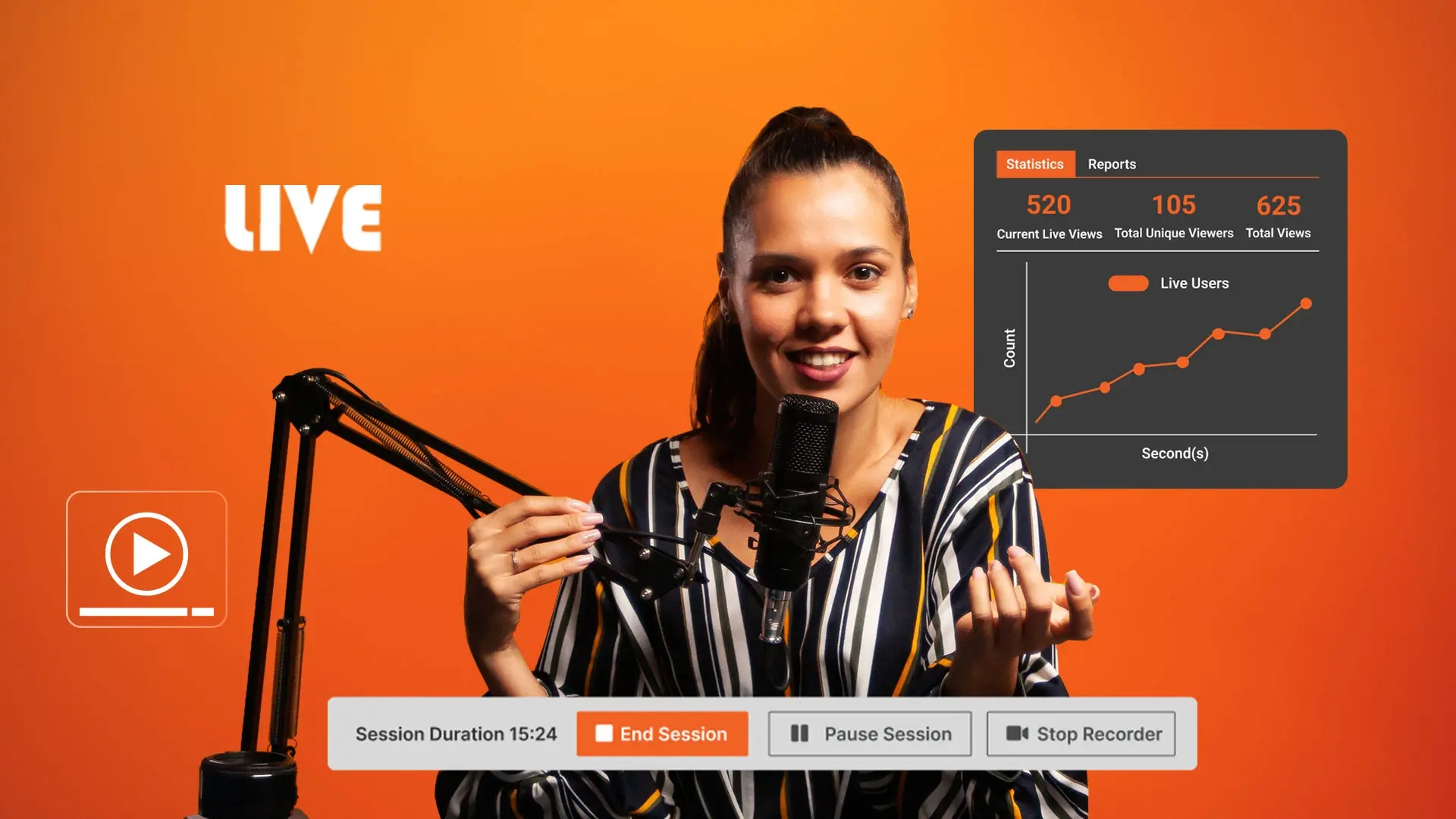

Live Streaming Platform

A live streaming platform is the final hub where everything comes together. It has all the functionalities needed to manage your live broadcast, connect with your audience, and deliver your content seamlessly.

Often, live streaming platforms will allow you to integrate external encoders and CDNs to make the process a bit simpler. Some platforms will even function as the streaming server by ingesting the encoder feed and pushing it out to their integrated CDNs.

Besides this, live streaming platforms come with additional features like analytics to keep track of the live stream and how it's being viewed, as well as interactivity options like live chats, FAQs, and social media feeds to engage viewers.

Live Streaming Protocols Used in Live Video Streaming

As discussed earlier, streaming protocols define how data is packaged, transmitted, and received to ensure a smooth and synchronized playback experience.

There are different kinds of streaming protocols out there, and they work in different ways, which makes them suitable for diverse applications. Depending on your priorities, you may find certain live-streaming protocols more suitable than others.

With that said, here are some of the most common protocols used for live streaming video content to viewers across the world.

Real Time Messaging Protocol (RTMP)

RTMP (Real Time Messaging Protocol) was developed by Macromedia in the early 2000s for streaming video, audio, and data between a Flash player and a server. Adobe acquired Macromedia in 2005 and opened the specification publicly in 2009. Flash Player itself was retired in December 2020, but RTMP outlived it. The protocol moved from being the delivery layer to the ingest layer, and remains the dominant ingest protocol used by encoders worldwide in 2026.

In a modern live streaming pipeline, RTMP carries the encoded feed from the encoder (hardware or software) to the streaming platform. The platform receives the feed, transcodes it into multiple HLS or DASH renditions, and delivers those to viewers. RTMP no longer reaches the viewer's device; HLS and DASH replaced it for delivery after the Flash era ended.

RTMP runs on TCP port 1935 by default (443 for the secure variant, RTMPS), splits the stream into chunks, and interleaves audio, video, and data. The reason it remains the ingest standard is ecosystem entrenchment: nearly every encoder on the market, including Teradek, OBS Studio, vMix, AJA, and Wirecast, defaults to RTMP. For a deeper look at how RTMP fits the modern ingest pipeline, see our [guide to what RTMP is](https://enterprisetube.com/blog/what-is-rtmp).
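As a minimal sketch of the port defaults described above, the following Python snippet parses an ingest URL and fills in the default port when the URL does not specify one. The hostname, application name, and stream key are made-up placeholders, and `parse_ingest_url` is an illustrative helper, not part of any encoder or platform API.

```python
from urllib.parse import urlparse

# Default ports as described above: 1935 for RTMP, 443 for RTMPS.
DEFAULT_PORTS = {"rtmp": 1935, "rtmps": 443}


def parse_ingest_url(url: str) -> dict:
    """Split an RTMP/RTMPS ingest URL into its parts (illustrative helper)."""
    parts = urlparse(url)
    scheme = parts.scheme.lower()
    if scheme not in DEFAULT_PORTS:
        raise ValueError(f"not an RTMP or RTMPS URL: {url}")
    # Assumed path layout: /<application>/<stream key>
    app, _, stream_key = parts.path.lstrip("/").partition("/")
    return {
        "secure": scheme == "rtmps",
        "host": parts.hostname,
        "port": parts.port or DEFAULT_PORTS[scheme],
        "app": app,
        "stream_key": stream_key,
    }


# Hypothetical ingest endpoint, for illustration only.
info = parse_ingest_url("rtmps://ingest.example.com/live/my-stream-key")
print(info["host"], info["port"], info["app"])
```

An explicit port in the URL (for example `rtmp://ingest.example.com:1936/live/key`) takes precedence over the default.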

Real-Time Streaming Protocol (RTSP)

RTSP (Real Time Streaming Protocol) was developed by RealNetworks, Netscape, and Columbia University and published as a standard in 1998. RTSP is a control protocol. It manages the streaming session (play, pause, stop) but does not carry the media itself. The actual media transport is handled by companion protocols like RTP (Real-time Transport Protocol) and SRTP (Secure Real-time Transport Protocol) for encrypted delivery.

In enterprise environments, RTSP is most commonly seen in IP camera deployments and surveillance systems rather than mainstream live streaming workflows. It can be tunneled over TLS for encryption (RTSP-over-TLS), but enterprise live streaming has largely consolidated around RTMP for ingest and HLS or DASH for delivery.

HTTP Live Streaming (HLS)

HLS was developed by Apple in 2009 as a proprietary streaming protocol to replace its QuickTime Streaming Server (QTSS). Currently, it sits as the de facto standard for streaming audio and video content.

The popularity of HLS is rooted in its ability to stream over HTTP, which allows it to be streamed directly within web browsers. This makes it compatible with a wide range of devices. What's more, it includes support for adaptive bitrate streaming (ABR).

If HLS has a drawback, it's that it has a relatively high latency when compared to other streaming protocols. Luckily, Apple continually brings updates and improvements to HLS and has even created a low-latency variant of HLS called Low Latency HLS (LL HLS).

Despite the high latency, HLS continues to be a popular choice for video streaming due to its impressive capabilities. In fact, legacy streaming protocols such as RTMP and RTSP remain in use today largely because their feeds can be converted to HLS for delivery: RTMP at the ingest stage, and RTSP through conversion tools like FFmpeg.

Dynamic Adaptive Streaming Over HTTP (MPEG-DASH)

MPEG-DASH (Dynamic Adaptive Streaming over HTTP) was developed by the Moving Picture Experts Group and published as an ISO/IEC standard in 2012. Like HLS, it delivers video over HTTP using adaptive bitrate streaming, but unlike HLS it is codec-agnostic and was designed from the start as an open international standard rather than a vendor-led specification.

DASH and HLS coexist today as the two dominant delivery protocols. Most enterprise streaming platforms package the same source content in both formats, then deliver whichever the viewer's player requests. Safari historically had less native DASH support than other browsers, but Media Source Extensions (MSE) and HTML5 video players (like dash.js, Shaka Player, and Video.js) handle DASH playback in Safari today. For enterprise platforms supporting both protocols, the choice between HLS and DASH happens at the player level, not as a constraint on the broadcaster.

Web Real-Time Communication (WebRTC)

For enterprise live streaming workflows, the practical protocol stack is: RTMP or RTMPS for ingest from encoder to platform, HLS or DASH for delivery to viewers, and WebRTC for ultra-low-latency interactive use cases like meetings. The streaming platform handles the protocol decisions automatically.

Broadcasters configure their encoder to push RTMPS to the platform, and viewers' players negotiate HLS or DASH based on their browser and device. To go deeper on any specific protocol, see our guides on [what RTMP is](https://enterprisetube.com/blog/what-is-rtmp) and the [enterprise CDN architecture that distributes the resulting streams](https://enterprisetube.com/blog/cdn-video-streaming).

The Live Streaming Process Explained In Depth

Now that we've mastered the fundamentals of live streaming, we can finally address the burning question: How does live streaming work on a technical level?

Believe it or not, despite all the technicalities and minor details involved, the live-streaming process can actually be fairly straightforward to understand. So, without further ado, let's find out the details of what makes a live stream tick.

Feed Capture

A live stream starts with capturing the raw audio and video data, often referred to as the "feed." The capture process depends on the type of audio/video source you're using.

In a very typical scenario, the light from the scene hits the digital camera sensor. The resulting image then undergoes an analog-to-digital conversion. At this point, even though the signal output is in a digital format, it is still considered 'raw' due to its large size and unoptimized state. This raw digital data isn't ready for efficient transmission over the internet for live streaming.
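To put a number on how large a raw feed is, here is a small worked calculation. It assumes 1080p video at 30 frames per second with 8-bit 4:2:0 chroma subsampling (which averages 12 bits per pixel) and a hypothetical 5 Mbps streaming target; neither figure comes from a specific camera or platform.

```python
def raw_bitrate_bps(width: int, height: int, fps: int, bits_per_pixel: int) -> int:
    """Bits per second needed to carry uncompressed video."""
    return width * height * bits_per_pixel * fps


# Assumed source: 1920x1080 at 30 fps, 12 bits per pixel (8-bit 4:2:0).
raw = raw_bitrate_bps(1920, 1080, 30, 12)

# Assumed delivery target: 5 Mbps, a common ballpark for 1080p streaming.
target = 5_000_000

print(f"raw: {raw / 1e6:.0f} Mbps")                        # raw: 746 Mbps
print(f"compression ratio needed: {raw / target:.0f}:1")   # compression ratio needed: 149:1
```

Under these assumptions the encoder has to shrink the feed by roughly two orders of magnitude before it can travel over an ordinary internet connection.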

Encoding and Compression

After the recording device outputs the video feed, it is sent to the encoder for further processing. Encoders can come in different shapes and sizes, from dedicated hardware to software and even cloud encoders.

As we discussed earlier, this is the step where the video feed is made a bit more 'manageable' for transmission and streaming. The encoder does this in two concurrent steps: encoding and compression. The encoder processes each video frame in blocks; in H.264 these are called macroblocks and cover 16x16 pixels.

To reduce the size of the raw video, the encoder compresses it with the help of a codec. Common codecs for live streaming include H.264, H.265, VP9, and AV1. The way the data is compressed depends on the specific codec.

For instance, H.264 uses a combination of different compression techniques like interframe compression, motion compensation, quantization, and entropy coding. These techniques utilize different processes, but the end goal is to reduce the amount of data without noticeably affecting quality.

Here's an example. Say you have a live stream of a presenter against a static backdrop. The presenter may move around during the stream, but the backdrop stays the same, so it doesn't need to be encoded again in full for every frame.

Here's a breakdown of how common compression techniques work:

Interframe Compression

This technique analyzes the differences between consecutive video frames. Instead of repeatedly storing entire frames, only the changes between frames are saved. This is highly effective for videos with minimal scene movement, as less data is needed to represent the changes.
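The idea can be sketched in a few lines of Python. This is a deliberately simplified illustration that compares tiny grayscale frames pixel by pixel; real codecs work on blocks and store residuals rather than raw pixel values, and the function names here are illustrative.

```python
def frame_delta(prev, curr):
    """Return {(row, col): new_value} for every pixel that changed."""
    return {
        (r, c): curr[r][c]
        for r in range(len(curr))
        for c in range(len(curr[0]))
        if curr[r][c] != prev[r][c]
    }


def apply_delta(prev, delta):
    """Rebuild the current frame from the previous frame plus the changes."""
    frame = [row[:] for row in prev]
    for (r, c), value in delta.items():
        frame[r][c] = value
    return frame


# Two 3x4 frames: a single bright pixel moves one position to the right.
frame1 = [[10, 10, 10, 10], [10, 50, 10, 10], [10, 10, 10, 10]]
frame2 = [[10, 10, 10, 10], [10, 10, 50, 10], [10, 10, 10, 10]]

delta = frame_delta(frame1, frame2)
print(f"{len(delta)} of 12 pixels stored")  # 2 of 12 pixels stored
```

The decoder reverses the process with `apply_delta(frame1, delta)`, which reproduces `frame2` exactly.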

Motion Compensation

Building upon interframe compression, this technique tracks how objects (or the whole scene, when the camera moves) shift between frames. Instead of storing entire frames with slight object movement, the encoder estimates the motion and stores only motion vectors, the information needed to reposition blocks of the previous frame, plus any small differences that remain.
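A minimal sketch of how an encoder might estimate motion is an exhaustive block-matching search: for one block of the current frame, try every nearby position in the previous frame and keep the one with the smallest sum of absolute differences (SAD). The frame sizes, search range, and function names are illustrative assumptions; production encoders use much faster search strategies.

```python
def sad(frame, block, top, left):
    """Sum of absolute differences between `block` and the frame region at (top, left)."""
    return sum(
        abs(frame[top + r][left + c] - block[r][c])
        for r in range(len(block))
        for c in range(len(block[0]))
    )


def find_motion_vector(prev, curr, top, left, size, search):
    """Find where the block at (top, left) in `curr` best matches in `prev`."""
    block = [row[left:left + size] for row in curr[top:top + size]]
    best = None
    for dy in range(-search, search + 1):
        for dx in range(-search, search + 1):
            y, x = top + dy, left + dx
            # Skip candidate positions that fall outside the previous frame.
            if 0 <= y <= len(prev) - size and 0 <= x <= len(prev[0]) - size:
                cost = sad(prev, block, y, x)
                if best is None or cost < best[0]:
                    best = (cost, dy, dx)
    return best  # (cost, dy, dx)


def make_frame(height, width, top, left, size, value=200):
    """Black frame with one bright square, used as test data."""
    frame = [[0] * width for _ in range(height)]
    for r in range(top, top + size):
        for c in range(left, left + size):
            frame[r][c] = value
    return frame


prev_frame = make_frame(6, 6, top=1, left=1, size=2)
curr_frame = make_frame(6, 6, top=2, left=3, size=2)

cost, dy, dx = find_motion_vector(prev_frame, curr_frame, top=2, left=3, size=2, search=2)
print(cost, dy, dx)  # 0 -1 -2
```

Here the vector (-1, -2) tells the decoder to copy the block from one row up and two columns left in the previous frame, and a cost of 0 means no residual needs to be stored.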

Quantization

Quantization reduces the precision of the values used to represent the video. Instead of storing exact values across a wide range, the encoder scales them down and rounds them to a smaller set of simpler values. This is the lossy step: the fine detail that is rounded away cannot be recovered.
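As a rough illustration, the snippet below quantizes a handful of made-up coefficient values with a single step size. Real codecs apply quantization to transformed blocks with per-frequency step sizes, so treat the numbers and the fixed step of 16 as assumptions.

```python
def quantize(values, step):
    """Scale values down by `step` and round to the nearest integer level."""
    return [round(v / step) for v in values]


def dequantize(levels, step):
    """Scale the levels back up; the rounding error is not recoverable."""
    return [level * step for level in levels]


# Hypothetical coefficients: a few large values followed by small detail.
coefficients = [310, -47, 23, 9, -4, 2, 1, 0]
step = 16

levels = quantize(coefficients, step)
restored = dequantize(levels, step)

print(levels)    # [19, -3, 1, 1, 0, 0, 0, 0]
print(restored)  # [304, -48, 16, 16, 0, 0, 0, 0]
```

The small values collapse to zero, and long runs of zeros are exactly what the entropy coding stage compresses well. A larger step saves more data at the cost of more visible error.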

Entropy Coding

Entropy coding exploits patterns in the video data. The more frequently occurring patterns are represented by fewer bits, and the less frequently occurring patterns are represented by more bits.
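Huffman coding is a classic way to see this in action: frequent symbols receive short codes and rare symbols receive long ones. The sketch below computes Huffman code lengths for a toy string. H.264 itself uses more elaborate schemes (CAVLC and CABAC), so this illustrates the principle rather than the codec's actual method.

```python
import heapq
from collections import Counter


def huffman_code_lengths(symbols):
    """Return {symbol: code length in bits} for a Huffman code over `symbols`."""
    counts = Counter(symbols)
    if len(counts) == 1:
        return {next(iter(counts)): 1}
    # Heap entries: (total count, tie-breaker, {symbol: depth so far}).
    heap = [(count, i, {sym: 0}) for i, (sym, count) in enumerate(counts.items())]
    heapq.heapify(heap)
    tie = len(heap)
    while len(heap) > 1:
        c1, _, a = heapq.heappop(heap)
        c2, _, b = heapq.heappop(heap)
        # Merging two subtrees pushes every symbol in them one level deeper.
        merged = {sym: depth + 1 for sym, depth in {**a, **b}.items()}
        heapq.heappush(heap, (c1 + c2, tie, merged))
        tie += 1
    return heap[0][2]


data = "aaaaaaaabbbccd"  # 8 x a, 3 x b, 2 x c, 1 x d
lengths = huffman_code_lengths(data)
total_bits = sum(lengths[s] for s in data)

print(lengths["a"], lengths["b"], lengths["c"], lengths["d"])  # 1 2 3 3
print(total_bits, "bits vs", 2 * len(data), "bits at a fixed 2 bits per symbol")  # 23 bits vs 28 bits ...
```

The most common symbol costs a single bit, which is why the total drops even though the rare symbols cost more than they would with a fixed-length code.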

Of course, several other techniques depend on the specific codec being used. The other part of this process, the encoding itself, helps the video be compatible with a variety of devices and ensures that playback is supported. To play it, the user's device will need to 'decode' it again.

Segmentation

Streaming through modern protocols like HLS and MPEG-DASH requires the delivery of chunks of video that can be sent to the video player to ensure efficient streaming. Segmentation involves dividing the encoded video stream into smaller chunks of data called segments. These segments typically range from a few seconds to ten seconds in length. (The length ultimately depends on the specific streaming platform and encoding settings.)
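A simplified sketch of segmentation follows. It assumes the segmenter can only cut at keyframes (segments typically start on a keyframe so each one can be decoded on its own) and closes a segment at the first keyframe that is at least the target duration after the segment start. The keyframe interval and the 6-second target are illustrative values.

```python
def segment_boundaries(keyframe_times, end_time, target):
    """Return (start, end) pairs, cutting only at keyframe timestamps."""
    segments = []
    start = keyframe_times[0]
    for t in keyframe_times[1:]:
        if t - start >= target:
            segments.append((start, t))
            start = t
    # Whatever remains after the last cut becomes a final, shorter segment.
    if end_time > start:
        segments.append((start, end_time))
    return segments


# Assumed input: a keyframe every 2 seconds over a 15-second stretch of video.
keyframes = [0, 2, 4, 6, 8, 10, 12, 14]
segments = segment_boundaries(keyframes, end_time=15, target=6)

print(segments)  # [(0, 6), (6, 12), (12, 15)]
```

Shorter targets reduce latency because the player waits less for each segment, at the cost of more requests and slightly less efficient compression.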

Transcoding

We discussed the importance of adaptive bit rate (ABR) streaming earlier and how it helps ensure a seamless streaming experience for the user. Transcoding is a critical process that makes ABR possible.

This step aims to create multiple renditions of the video with different bitrates and resolutions. These processes are called trans-rating and trans-sizing, respectively. Here are the steps involved in a typical transcoding process:

De-Muxing

First, the transcoder analyzes the encoded file segments to locate the video and audio streams. Once identified, they are separated into individual components for processing. This separation is necessary because audio and video streams undergo different compression processes.

Decoding

Next, the transcoder decodes the separated video stream into an intermediary format like YUV or RGB. It reverses the encoding processes, for example by applying inverse quantization, to recover an approximation of the original pixel values for each frame (detail discarded by lossy compression is not restored). This reconstructs the video.

Post-processing

This is the step where the video itself is modified, for example by resizing each frame to a different resolution. At this point, the video is still uncompressed.

Re-encoding

With the desired modifications applied, the video data is compressed and re-encoded using a chosen codec at the target bitrate and resolution. This creates a new rendition of the video stream optimized for a specific audience segment.

Muxing

Finally, the file is reassembled with the video and audio streams and any metadata that needs to be attached.
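The steps above can be sketched as a rendition ladder that is turned into one FFmpeg command per rendition. The ladder values, file names, and helper names are illustrative assumptions, and the snippet only builds the argument lists without running FFmpeg. A production live transcoder would read from the live ingest rather than a file and would usually produce all renditions in a single process.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rendition:
    name: str
    width: int
    height: int
    video_kbps: int
    audio_kbps: int


# Hypothetical ladder; real platforms tune these values per use case.
LADDER = [
    Rendition("1080p", 1920, 1080, 5000, 128),
    Rendition("720p", 1280, 720, 2800, 128),
    Rendition("480p", 854, 480, 1400, 96),
    Rendition("360p", 640, 360, 800, 64),
]


def ffmpeg_args(source: str, r: Rendition) -> list:
    """Build an FFmpeg command that decodes `source` and re-encodes one rendition."""
    return [
        "ffmpeg", "-i", source,
        "-vf", f"scale={r.width}:{r.height}",             # trans-sizing (post-processing)
        "-c:v", "libx264", "-b:v", f"{r.video_kbps}k",    # trans-rating (re-encoding)
        "-c:a", "aac", "-b:a", f"{r.audio_kbps}k",        # audio is re-encoded separately
        f"{r.name}.mp4",                                  # muxed output file
    ]


commands = [ffmpeg_args("input.mp4", r) for r in LADDER]
for cmd in commands:
    print(" ".join(cmd))
```

De-muxing and decoding happen implicitly when FFmpeg reads the input, and muxing happens when it writes the output container.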

Manifest File Creation

If you remember, we created video segments earlier in this process to help with ABR. The only problem is, how does a video player keep track of so many different segments?

The answer is the manifest file.

After transcoding the video file, but before repackaging it, the system creates a manifest file to map out all the different video segments of each bitrate and resolution. This helps the video player and server track which segment to deliver and stream next.

The system creates manifest files (also known as playlist files); HLS uses the .m3u8 format. There can be a single manifest file, or two levels of manifest: a primary manifest (also called the master manifest) and one media manifest per rendition, each referenced from the primary manifest.

Primary Manifest

This is the first file that the video player requests. The primary manifest contains information about all the available video streams. It lists the resolution of each stream, the bandwidth required to stream it, and the codecs required for playback. When there is only a single manifest, it also contains the information about the segments.

Media Manifest

The media manifest contains information about the duration and the URL of each playback segment.

There can be other types of manifest files for different streaming protocols, such as the Media Presentation Description (MPD) in the case of MPEG-DASH.
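To make the two HLS manifest types concrete, here is a minimal sketch that generates both as text. The tags used (`#EXTM3U`, `#EXT-X-STREAM-INF`, `#EXT-X-TARGETDURATION`, `#EXT-X-MEDIA-SEQUENCE`, `#EXTINF`) are standard HLS playlist tags, while the rendition values, file names, and function names are hypothetical.

```python
def primary_manifest(renditions):
    """Build the primary (master) playlist listing every rendition."""
    lines = ["#EXTM3U"]
    for r in renditions:
        lines.append(
            f'#EXT-X-STREAM-INF:BANDWIDTH={r["bandwidth"]},'
            f'RESOLUTION={r["width"]}x{r["height"]},CODECS="{r["codecs"]}"'
        )
        lines.append(r["uri"])  # points at that rendition's media manifest
    return "\n".join(lines) + "\n"


def media_manifest(segments, target_duration, first_sequence=0):
    """Build a media playlist listing the duration and URL of each segment."""
    lines = [
        "#EXTM3U",
        "#EXT-X-VERSION:3",
        f"#EXT-X-TARGETDURATION:{target_duration}",
        f"#EXT-X-MEDIA-SEQUENCE:{first_sequence}",
    ]
    for duration, uri in segments:
        lines.append(f"#EXTINF:{duration:.3f},")
        lines.append(uri)
    # No end-of-list tag: the stream is live and more segments will follow.
    return "\n".join(lines) + "\n"


# Hypothetical renditions and segment names.
renditions = [
    {"bandwidth": 5000000, "width": 1920, "height": 1080,
     "codecs": "avc1.640028,mp4a.40.2", "uri": "1080p/playlist.m3u8"},
    {"bandwidth": 2800000, "width": 1280, "height": 720,
     "codecs": "avc1.64001f,mp4a.40.2", "uri": "720p/playlist.m3u8"},
]
primary = primary_manifest(renditions)
media = media_manifest(
    [(6.0, "seg100.ts"), (6.0, "seg101.ts"), (6.0, "seg102.ts")],
    target_duration=6,
    first_sequence=100,
)
print(primary)
print(media)
```

During a live stream the media manifest is regenerated as new segments arrive, which is why the player keeps re-requesting it.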

Progressive Delivery to CDN

After transcoding and segmentation, each segment is typically packaged in a container format like MPEG-TS (Transport Stream), which multiplexes the compressed audio, video, and timing metadata into a single streamable unit. The segments and their manifest files are then sent to an origin server, which acts as a central repository for the stream. The origin server stores this data but doesn't usually serve it directly to viewers.

The origin server transmits the packaged stream content to edge servers over HTTP, the same transport that delivery protocols like HTTP Live Streaming (HLS) are built on.

Caching on Edge Server

As edge servers start receiving data from the origin server, they utilize various caching mechanisms to store the content locally. These mechanisms can involve caching entire segments, specific data chunks within segments, or using techniques like byte-range requests to efficiently serve only the necessary data for each viewer.

Since live streams are constantly updated with new segments, there are often mechanisms in place to invalidate outdated content from the edge server cache, like Time-To-Live (TTL) values or invalidation messages from the origin server. This ensures viewers receive the latest version of the stream.
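A TTL-based edge cache can be sketched as follows. The class and its interface are illustrative assumptions, not any CDN's actual API; an injectable clock is used so the expiry behavior can be demonstrated without waiting.

```python
import time


class EdgeCache:
    """Tiny TTL cache standing in for an edge server (illustrative only)."""

    def __init__(self, fetch_from_origin, ttl_seconds, clock=time.monotonic):
        self._fetch = fetch_from_origin
        self._ttl = ttl_seconds
        self._clock = clock
        self._store = {}  # path -> (expires_at, body)
        self.hits = 0
        self.misses = 0

    def get(self, path):
        now = self._clock()
        entry = self._store.get(path)
        if entry is not None and entry[0] > now:
            self.hits += 1            # still fresh: serve the local copy
            return entry[1]
        self.misses += 1              # missing or expired: go back to origin
        body = self._fetch(path)
        self._store[path] = (now + self._ttl, body)
        return body


# Demo with a fake clock and a fake origin server.
fake_now = [0.0]
origin_requests = []


def origin(path):
    origin_requests.append(path)
    return f"contents of {path}"


cache = EdgeCache(origin, ttl_seconds=2.0, clock=lambda: fake_now[0])
cache.get("live/playlist.m3u8")   # first request: fetched from origin
cache.get("live/playlist.m3u8")   # within TTL: served from cache
fake_now[0] = 3.0
cache.get("live/playlist.m3u8")   # TTL expired: fetched from origin again

print(cache.hits, cache.misses, len(origin_requests))  # 1 2 2
```

In practice a live manifest would get a short TTL because it changes every few seconds, while finished segments never change and can be cached for much longer.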

Video Delivery

As the viewer loads up a live video stream on their player, it requests the manifest file from the CDN. The video player evaluates the size of the playback window and the network speed to determine the appropriate quality of the video rendition it needs.
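One simple way a player could make this choice is sketched below: pick the highest-bitrate rendition that fits within a safety margin of the measured bandwidth and is no taller than the player window. The 0.8 safety factor, the ladder values, and the function name are assumptions; real players use more sophisticated heuristics that also consider buffer level.

```python
def pick_rendition(renditions, measured_bps, player_height, safety=0.8):
    """Choose the best rendition for the current bandwidth and window size."""
    budget = measured_bps * safety  # leave headroom for bandwidth fluctuations
    candidates = [
        r for r in renditions
        if r["bandwidth"] <= budget and r["height"] <= player_height
    ]
    if not candidates:
        # Nothing fits: fall back to the lowest bitrate rather than stopping.
        return min(renditions, key=lambda r: r["bandwidth"])
    return max(candidates, key=lambda r: r["bandwidth"])


# Hypothetical ladder, as it might be listed in a primary manifest.
renditions = [
    {"name": "1080p", "height": 1080, "bandwidth": 5_000_000},
    {"name": "720p", "height": 720, "bandwidth": 2_800_000},
    {"name": "480p", "height": 480, "bandwidth": 1_400_000},
    {"name": "360p", "height": 360, "bandwidth": 800_000},
]

print(pick_rendition(renditions, 4_000_000, 1080)["name"])   # 720p
print(pick_rendition(renditions, 10_000_000, 480)["name"])   # 480p
print(pick_rendition(renditions, 500_000, 1080)["name"])     # 360p
```

The second call shows why window size matters: even with plenty of bandwidth, there is no benefit in downloading 1080p video for a 480-pixel-tall player.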

Once the video player determines the requirements, it requests the desired video segment from the CDN. The CDN routes this request to the closest available edge server, which keeps a persistent TCP connection open to the viewer so the stream is delivered continuously without interruptions.

The video player will usually look up the appropriate sequence of video segments and request two or three of them at a time from the edge server. Upon receiving a request, the edge server checks whether it has that segment in its cache. If it does (a "cache hit"), the segment is served to the viewer straight away. If it doesn't (a "cache miss"), the edge server requests the segment from the origin server and then serves it.

Decoding and Playback

Once the video player receives the video segments, it uses the appropriate decoder (based on the codec specified in the manifest file) to convert the compressed video data back into frames that can be displayed.

As the stream starts, the player assembles and plays back the segments in the order listed in the manifest file, holding the segments it has received in a buffer. As playback progresses, the player decodes and displays video frames from the buffer. It also requests new segments from the CDN edge server to keep the buffer ahead of playback.

When the internet connection quality changes, the player requests higher- or lower-bitrate segments from the edge server. Of course, playback can sometimes hit errors, and a segment can be lost in transit. When that happens, the player re-requests the segment and the edge server delivers it again.

How Does Live Streaming Work on Live Streaming Platforms?

We've unpacked the technical journey of how a live stream works from start to end. If you're still with us, we just need to go over the last piece of the puzzle, which is the live streaming platforms. But what are live streaming platforms? And how does live streaming work with them?

Live streaming platforms simplify broadcasters' workflows by providing a user-friendly interface for managing streams and interacting with viewers. These platforms offer features for scheduling live streams in advance and promoting them to generate anticipation among viewers.

What's more, a live streaming platform offers interaction and audience engagement using interactivity options like live chat, FAQs, Q&As, and social media feeds. This allows viewers to participate and actively ask questions in real time.

Besides this, these platforms also come with analytics to track viewer data during the live stream. This gives organizations data to monitor and refine their content strategies.

Lastly, live streaming platforms make content sharing a breeze. Features like social media sharing buttons allow broadcasters to easily promote their live streams across various platforms. This helps them expand their reach and attract new viewers.

How AI Is Changing Enterprise Live Streaming in 2026

Live streaming was once a logistical challenge: get video from a camera to an audience without the stream dropping. In 2026, AI capabilities embedded in enterprise streaming platforms are changing what is possible before, during, and after a live event, automating work that previously required production staff, reducing post-event processing time, and turning a one-time broadcast into a permanent, searchable content asset.

Real-Time Transcription and Captioning

The most immediate AI application in enterprise live streaming is automated captioning. Rather than requiring a human stenographer or waiting for post-event processing, AI transcription engines can generate captions with under five seconds of latency from spoken word to on-screen text.

For enterprise organizations, real-time captions address three distinct needs simultaneously:

- Accessibility compliance: The ADA, Section 508 of the Rehabilitation Act, and WCAG 2.1 AA call for captions on live video in many regulated-industry and government contexts. Automated live captions eliminate the cost and scheduling overhead of human CART captioning for internal events.

- Global workforce inclusion: Captions in the viewer's language extend live events to employees who may not be native speakers of the presenter's language. EnterpriseTube's AI transcription supports 82 languages, enabling live captions across global teams from a single broadcast.

- Searchable VOD: When the live stream ends and converts to on-demand video, the transcript is immediately indexed. The entire event becomes searchable by keyword within minutes of broadcast completion, no manual tagging required.

AI-Generated Post-Stream Summaries and Clips

After a broadcast concludes, AI processing can automatically generate:

- A structured summary of the session (agenda items covered, key announcements, action items mentioned)

- Topic-based chapter markers, so viewers can jump directly to the segment relevant to them in the VOD

- Highlight clips identified from engagement signals, moments where viewer attention peaked or chat activity spiked

This directly addresses why most recorded live events go unwatched. A 90-minute all-hands that covers compensation updates, product roadmap, and Q&A does not need to be watched in full by every employee. A 200-word AI summary with three timestamp links changes the completion rate for that content.

AI Moderation for Large-Scale Live Events

Large enterprise broadcasts (company-wide all-hands, public webinars, live training sessions) often involve hundreds or thousands of simultaneous viewers, along with chat, Q&A, and audience interaction. AI moderation can:

- Filter inappropriate or off-topic chat messages in real time, before they display to the audience

- Flag sensitive information inadvertently visible on screen, such as PII or confidential documents, using object detection

- Blur faces in broadcast feeds where privacy compliance requires anonymization

For organizations in regulated industries, automated moderation during live events reduces the risk of compliance incidents that would otherwise require dedicated human monitoring at scale.

AI-Assisted Q&A and Audience Engagement

When hundreds of questions arrive in a live Q&A queue during a large event, the traditional approach, manually reviewing and selecting questions, does not scale. AI can:

- Group similar or duplicate questions automatically, surfacing a consolidated version to the moderator

- Rank questions by audience engagement signals (upvotes, frequency of similar submissions)

- Suggest poll questions in real time, based on the transcript content, that a moderator can push to the audience in one click

This shifts the moderator's role from triage to curation: they are choosing from a ranked, deduplicated list rather than processing raw volume.

From Live Event to Permanent Content Asset

The most significant AI-driven shift in enterprise live streaming is not what happens during the broadcast but what happens after. Without AI, a live stream produces a recording: a video file that requires manual captioning, summarizing, tagging, and redistribution. With AI processing, the same broadcast automatically produces:

- A fully captioned, searchable VOD available within minutes of stream end

- A structured transcript with speaker labels

- Timestamped topic chapters visible in the player's progress bar

- A shareable written summary for employees who cannot watch the full event

For enterprise organizations running dozens of live events per month (training sessions, team broadcasts, customer webinars, product demos), this eliminates hours of post-production work per event and ensures that every broadcast contributes to an organized, discoverable video library rather than a growing archive of unwatched recordings.

How EnterpriseTube Handles Live Streaming for the Enterprise

Live streaming for an enterprise audience is a different problem than live streaming for the open internet. Internal town halls reaching thousands of employees can saturate the corporate WAN without an enterprise content delivery network. Compliance training requires authentication, audit logs, and completion tracking that consumer live streaming tools do not provide. Recordings need to land in a searchable, governed library, not a folder somewhere.

EnterpriseTube ingests live video over RTMPS, transcodes feeds into adaptive bitrate renditions in real time, and distributes through both a public CDN (for external viewers) and a P2P enterprise content delivery network (for internal corporate distribution). Recording happens automatically. Once the event ends, AI processes the recording for transcription in 82 languages, automatic chaptering, summarization, and object detection, and the on-demand replay is searchable from the moment processing finishes.

Deployment options include SaaS, dedicated cloud, on-premises, hybrid, and government cloud, which matters for regulated industries that cannot run video traffic through commercial cloud infrastructure. Authentication runs through SSO via SAML 2.0, OAuth 2.0, or OpenID Connect with any major identity provider, and granular access controls (RBAC, time-limited links, SSO-driven group permissions) keep internal events internal.
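As a general illustration of how a time-limited link can work, the Python sketch below signs a path and an expiry timestamp with an HMAC and rejects the link once the expiry passes or the signature does not match. This shows the generic technique only and is not a description of EnterpriseTube's implementation; the secret key, the URL layout, and the function names `sign_link` and `verify_link` are assumptions made for the example.

```python
import hashlib
import hmac
import time

# Hypothetical secret; in practice this would come from a secret store.
SECRET_KEY = b"replace-with-a-real-secret"


def _signature(path, expires_at, key):
    """HMAC-SHA256 over the path and expiry, so neither can be altered."""
    message = f"{path}|{expires_at}".encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def sign_link(path, expires_at, key=SECRET_KEY):
    """Return the path with an expiry timestamp and signature appended."""
    return f"{path}?expires={expires_at}&sig={_signature(path, expires_at, key)}"


def verify_link(path, expires_at, signature, now=None, key=SECRET_KEY):
    """Accept the link only if it is unexpired and the signature matches."""
    now = int(time.time()) if now is None else now
    if now > expires_at:
        return False
    expected = _signature(path, expires_at, key)
    # Constant-time comparison avoids leaking signature bytes via timing.
    return hmac.compare_digest(expected, signature)


if __name__ == "__main__":
    expiry = int(time.time()) + 3600  # link valid for one hour
    url = sign_link("/events/town-hall", expiry)
    sig = url.split("sig=")[1]
    print(url)
    print(verify_link("/events/town-hall", expiry, sig))
```

Because the expiry is part of the signed message, a viewer cannot extend a link's lifetime by editing the `expires` parameter; doing so invalidates the signature.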

Ready to take your live streaming efforts to the next level? Sign up for a 7-day free trial, or contact us to learn more.

Key Takeaways

- Live streaming is the real-time broadcast of video and audio over the internet through a pipeline that includes capture, encoding, segmentation, transcoding, CDN delivery, and decoding on the viewer's device. Each step has to function correctly in sequence, or the stream fails.

- RTMP is still the dominant ingest protocol for enterprise live streaming in 2026. Flash Player was retired in 2020, but RTMP outlived it. The protocol moved from delivery to ingest and remains the ecosystem standard for encoders.

- HLS and DASH are the dominant delivery protocols. The streaming platform packages content in both formats and delivers whichever the viewer's player requests. Cross-browser support is no longer a meaningful constraint on either.

- Adaptive bitrate streaming and content delivery networks are what make live streaming work at scale. ABR adjusts video quality to match each viewer's network conditions, and CDNs reduce the distance video data has to travel to reach the viewer.

- Enterprise live streaming requires capabilities that consumer live streaming tools don't provide: SSO authentication, an eCDN for internal corporate distribution, compliance audit logs, AI transcription and captioning, and recording into a governed library that survives the live event.

- AI is changing what enterprise live streaming produces. Beyond the broadcast itself, automated transcription, chaptering, summarization, and content indexing turn every live event into a permanent, searchable asset within minutes of the stream ending.

People Also Ask

Live streaming works by capturing raw video through a camera, encoding it into a compressed format, sending it through a streaming server and CDN, and finally decoding it on the viewer’s device for near real-time playback. Each step ensures smooth delivery even over varying internet speeds.

Live streaming works on most devices and browsers by using protocols like HLS or MPEG-DASH. These protocols package video into small segments that modern devices can decode, allowing seamless playback on phones, laptops, tablets, and smart TVs.

Adaptive bitrate (ABR) streaming works by creating multiple versions of the live stream at different resolutions and bitrates. The video player automatically switches between these based on the viewer’s internet speed to prevent buffering and maintain smooth playback.

A CDN is important for live streaming because it caches video segments on edge servers close to the viewer’s location. This reduces latency, minimizes buffering, and ensures reliable playback even for global audiences.

Live streaming requires an audio/video source, an encoder, a streaming server, a CDN, and a live-streaming platform. Each component handles a specific stage of processing and delivery to ensure a stable, high-quality stream.

Encoding compresses raw video into a digital format suitable for online delivery. It reduces file size, improves compatibility with devices and browsers, and prepares the stream for efficient distribution through a CDN.

Yes, live streaming can work with limited bandwidth by using adaptive bitrate streaming. The player automatically lowers video quality when bandwidth drops to prevent interruptions or playback stalls.

Enterprise platforms like EnterpriseTube streamline how live streaming works by integrating encoders, CDNs, and player technology into one system. They also provide interactivity, analytics, authentication, and secure delivery features tailored for organizational use.

Live streaming broadcasts content in real time as it is captured, while on-demand video is pre-recorded and played at any time. Live streaming enables immediate interaction and real-time engagement with viewers.

Live streaming reaches global audiences through CDNs that distribute video segments to edge servers worldwide. By serving the stream from a nearby server, the system minimizes latency and ensures consistent quality for viewers regardless of location.

Live streaming is a one-to-many broadcast: one or a small number of presenters transmit to a large audience that primarily watches and interacts through chat or Q&A. Video conferencing is a many-to-many communication format where all participants can see and speak to each other simultaneously. The distinction matters for enterprises: video conferencing tools (Teams, Zoom, Webex) are designed for meetings of dozens to hundreds of participants.

Adaptive bitrate (ABR) streaming encodes the live stream at multiple quality levels simultaneously, for example, 1080p at 6 Mbps, 720p at 3 Mbps, 480p at 1.5 Mbps, and 360p at 800 Kbps. The stream is segmented and all quality levels are made available at the CDN edge. The viewer's player continuously monitors available download bandwidth and automatically switches to the highest quality level the connection can sustain without buffering. If a viewer's connection degrades mid-stream, the player switches to a lower quality tier in real time, typically within one or two segments, rather than stopping playback.
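The player-side decision described above can be sketched in a few lines of Python. This is a simplified illustration, not the logic of any specific player: real players also weigh buffer level and recent throughput history. The ladder uses the example bitrates from this answer, while the `safety_factor` headroom and the names `Rendition` and `pick_rendition` are assumptions.

```python
from typing import NamedTuple


class Rendition(NamedTuple):
    name: str
    bitrate_kbps: int


# Example ladder taken from the bitrates quoted in the text.
LADDER = [
    Rendition("1080p", 6000),
    Rendition("720p", 3000),
    Rendition("480p", 1500),
    Rendition("360p", 800),
]


def pick_rendition(measured_kbps, ladder=LADDER, safety_factor=0.8):
    """Pick the highest-bitrate rendition the connection can sustain.

    `safety_factor` is an assumed headroom: only 80% of the measured
    bandwidth is budgeted, so small dips do not immediately cause stalls.
    """
    budget = measured_kbps * safety_factor
    for rendition in sorted(ladder, key=lambda r: r.bitrate_kbps, reverse=True):
        if rendition.bitrate_kbps <= budget:
            return rendition
    # Nothing fits: fall back to the lowest tier rather than stop playback.
    return min(ladder, key=lambda r: r.bitrate_kbps)


if __name__ == "__main__":
    # Simulate a connection that degrades mid-stream, one reading per segment.
    for kbps in (10000, 4000, 2000, 500):
        print(kbps, "kbps ->", pick_rendition(kbps).name)
```

Running the selection once per segment is what lets the player step down a tier within a segment or two of a bandwidth drop instead of buffering.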

About the Author

Rafay Muneer

Rafay Muneer is a Senior Product Marketing Strategist at VIDIZMO with deep expertise in data protection, AI redaction, and privacy compliance. He covers how public safety agencies, legal teams, and enterprise organizations build defensible, technology-driven approaches to sensitive data management.
